The “word of the year” for 2023 was “Enshittification”, a term coined by author Cory Doctorow, who described the process like this:

“Here is how platforms die: first, they are good to their users; then they abuse their users to make things better for their business customers; finally, they abuse those business customers to claw back all the value for themselves. Then, they die.”

Similar concepts have been written about previously.

An Australian dictionary summarized it nicely in 2024 as “The gradual deterioration of a service or product brought about by a reduction in the quality of service provided, especially of an online platform, and as a consequence of profit-seeking.”

We’ve all observed numerous variants of enshittification. Most involve the service provider boosting its revenue at the expense of the quality of the service; others stem from the organization failing in some way:

· Changing the business model of the service because the original premise isn’t sustainable

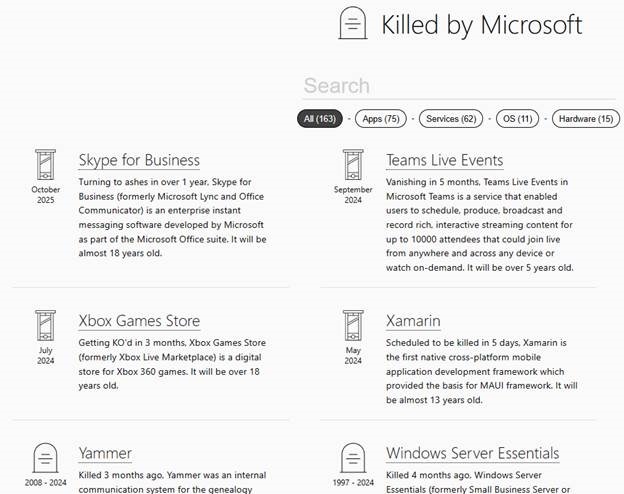

· Killing products or removing features which cost too much to provide

· Failure to adapt to new technology, stifling innovation and leading to stagnation and irrelevance

· Decline of a service or community due to poor leadership, user behaviour or the rise of a competitor

· Trapping customers, making it inordinately difficult to cancel or migrate from the service

Sometimes these moves are long planned: capture the market by operating at a loss, then pay back your investors later by reaping the rewards of early market advantage, potentially even turning the screws on your customers (see Amazon, Netflix). Some companies are overaggressive competitors, looking to quash alternatives (Amazon, Microsoft). And it’s just a fact of life that some things don’t work, and walking away from them angers or disappoints the customers who used them (see Google, Microsoft, many others).

2025 so far

This year is barely 20% over, but we’ve already seen numerous changes to popular online services. Netflix (among others) is cranking up subscription prices again; Microsoft has added Copilot features to its Microsoft 365 Personal and Family plans, jacking up the cost significantly to pay for them. Spotify has been teasing a lossless service for years, and might finally get around to launching it this summer. Hands up who thinks it will cost extra over the standard tier?

Even if a service provider gives notice that it’s going to make some degrading change (or if others collect the news and report it, as WindowsForum.com does for all the upcoming Microsoft cuts), it can still feel like a shock when you notice a feature isn’t there any more. Microsoft calls it “deprecation”.

As mentioned in ToW #62, there are lots of occasions where a feature changes very much for the worse (from a user’s perspective) but there’s nothing much you can do about it other than seek an alternative.

Search caching

One relatively quiet change that happened in both Google and Bing during 2024 was the removal of cached pages from search results. The cache was a handy way to view a web page that, for whatever reason, was no longer online, and it could also show how a page looked before some recent change. “Link rot” means that lots of pages link to sites that have since disappeared.

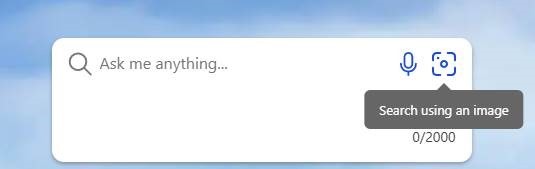

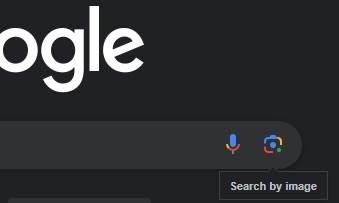

Both Google and Bing used to have cached copies of pages that could be viewed by clicking an icon next to the item in search results.

Google discontinued it without notice in February 2024; people who noticed turned to Bing, Yahoo or Baidu, which all still offered cached pages at the time. The reasons for removal? “It was meant for helping people access pages when way back, you often couldn’t depend on a page loading. These days, things have greatly improved. So, it was decided to retire it.”

Bing followed suit in December, saying, “This week, we’ve removed cache links from Bing search results. As the internet has evolved for better reliability, and many pages aren’t optimized for cache viewing.”

Both reasons smack of “we’re doing this because it makes your life simpler and the feature wasn’t needed any more anyway”, but in reality there are likely cost savings, and potentially legal reasons too. Why offer a service if you can’t monetize it? What’s next?

Google has since wired in a link to the Internet Archive (a free, useful resource, though sometimes a bit slow and not always complete). To reach it, click the three-dot menu (⋮) beside a search result, then click through to “More about this page”.
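If you’d rather skip the clicking, the Internet Archive also exposes a public Wayback Machine “availability” API. The sketch below assumes the `https://archive.org/wayback/available` endpoint and its JSON response shape (an `archived_snapshots.closest` object); the function names are purely illustrative, not part of any official client.

```python
import json
import urllib.parse
import urllib.request
from typing import Optional

# Public Wayback Machine availability endpoint (assumed; no API key needed).
AVAILABILITY_API = "https://archive.org/wayback/available"


def build_query(url: str, timestamp: Optional[str] = None) -> str:
    """Build the request URL asking for an archived copy of `url`.

    `timestamp` is optional, in YYYYMMDDhhmmss form or a prefix such as "2023";
    when given, the API should return the snapshot closest to that date.
    """
    params = {"url": url}
    if timestamp:
        params["timestamp"] = timestamp
    return AVAILABILITY_API + "?" + urllib.parse.urlencode(params)


def closest_snapshot(response_json: str) -> Optional[str]:
    """Extract the closest snapshot's URL from the API's JSON, or None if absent."""
    data = json.loads(response_json)
    closest = data.get("archived_snapshots", {}).get("closest")
    if closest and closest.get("available"):
        return closest.get("url")
    return None


def find_archived_copy(url: str, timestamp: Optional[str] = None) -> Optional[str]:
    """Call the live API (requires network access) and return a snapshot URL, if any."""
    with urllib.request.urlopen(build_query(url, timestamp), timeout=30) as resp:
        return closest_snapshot(resp.read().decode("utf-8"))
```

For example, `find_archived_copy("example.com", "2023")` should return a `web.archive.org` link to the snapshot nearest to 2023, or `None` if the page was never archived. Like the web interface, it can be slow, and it only finds pages the Archive happened to crawl.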

Turn to specific addins

One use case for cached results is to see how something was described before it was updated, or how much something was previously advertised for. Have you ever seen a product marked as “SOLD” and wondered what it had been priced at?

It may be worth browsing the various extension and app stores to see if an enterprising developer has built something that might help. One such is the excellent AT Price Tracker, for the UK Autotrader website.

Who’d want to be trying to sell luxury 3-ton EV-SUVs at the moment?

AT Price Tracker shows a summary of the prices the same advert has previously been listed at. Traders could remove an advert entirely and re-post it to fox the app’s logic, but the tool is presumably far enough under the radar that most won’t even notice it.

Unless Autotrader decides to get some enshittification in and block whatever access the addin has.

![clip_image001[6] clip_image001[6]](/wp-content/uploads/2022/07/clip_image0016_thumb.png)

![clip_image003[4] clip_image003[4]](/wp-content/uploads/2022/07/clip_image0034_thumb.png)

![clip_image005[4] clip_image005[4]](/wp-content/uploads/2022/07/clip_image0054_thumb.png)

![clip_image007[4] clip_image007[4]](/wp-content/uploads/2022/07/clip_image0074_thumb.png)

![clip_image009[4] clip_image009[4]](/wp-content/uploads/2022/07/clip_image0094_thumb.png)

![clip_image011[4] clip_image011[4]](/wp-content/uploads/2022/07/clip_image0114_thumb.png)

![clip_image013[4] clip_image013[4]](/wp-content/uploads/2022/07/clip_image0134_thumb.png)

![clip_image015[4] clip_image015[4]](/wp-content/uploads/2022/07/clip_image0154_thumb.png)